About WebCrawler.buzz — Free Web Crawler Online

A free, open-source online web crawler that anyone can use, inspect, and contribute to. It is a domain crawler built with transparency and trust in mind.

What is WebCrawler.buzz?

WebCrawler.buzz is a production-grade online web crawler and domain crawler that discovers every publicly accessible page it can reach through a website's internal links. Use it to crawl a website online and extract page titles, meta descriptions, HTTP status codes, response times, content types, page sizes, internal/external link counts, redirect chains, and indexability status.

It's designed for SEO professionals, developers, and website owners who need a quick, reliable free website crawler — without installing desktop software, creating accounts, or paying subscription fees.

💡 This is NOT affiliated with the original 1990s WebCrawler search engine. This is an independent, modern, open-source SEO tool built from scratch.

How It Works

Paste any URL into the search bar and the crawler starts a breadth-first scan of the entire domain. It follows every internal link it finds, building a complete map of your site as it goes — no depth limits, no page caps.

For each page discovered, you get actionable data: the page title, meta description, HTTP status code, server response time, content type, word count, and whether the page is indexable. If there are redirect chains, the crawler traces them to the final destination.

When the crawl finishes, you can browse the results in your browser or export everything as a CSV file delivered to your inbox. The whole process runs in the cloud — nothing to install, no account required.

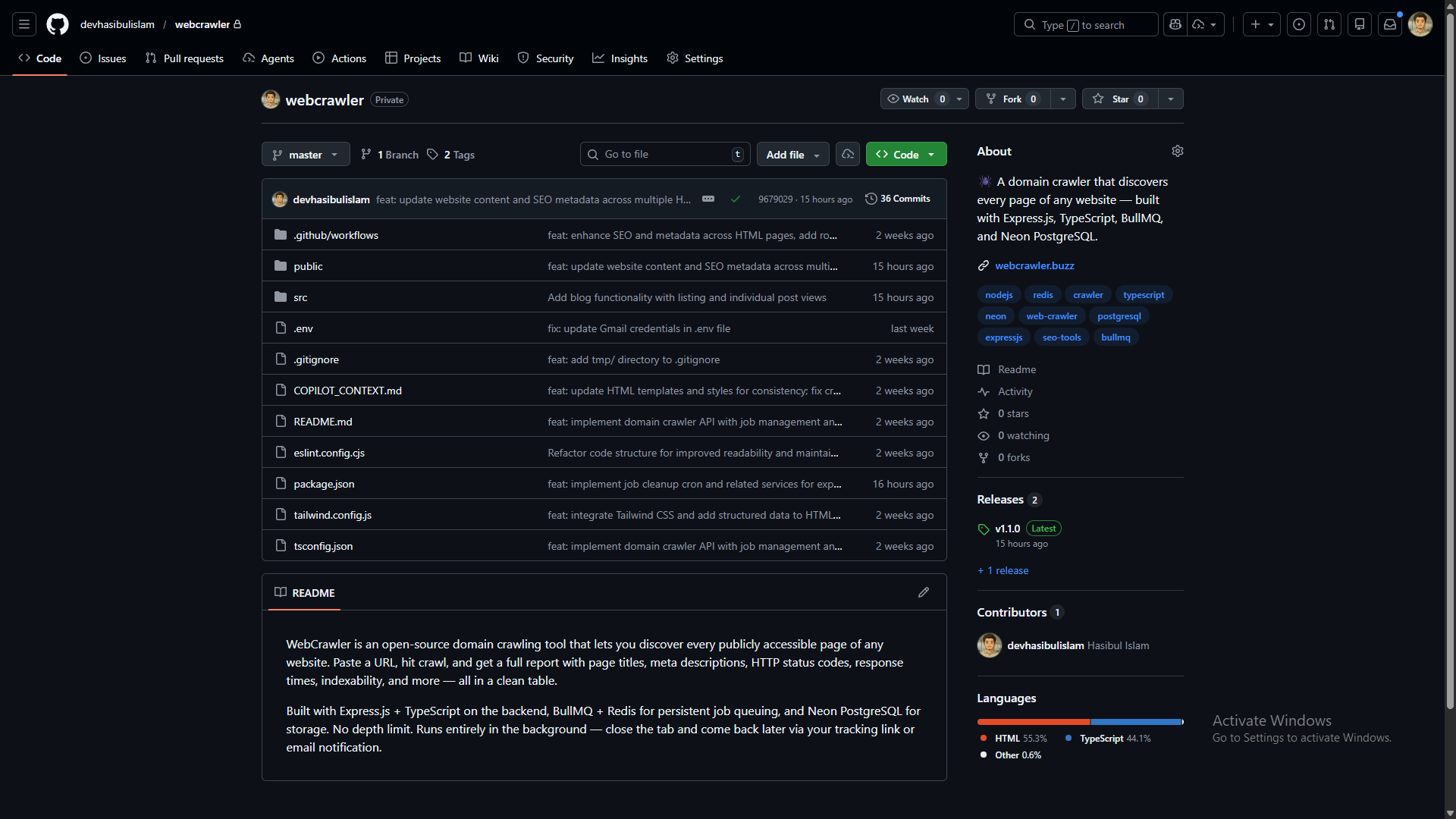

🔓 Fully Open Source

Every line of code is publicly available on GitHub under the MIT License. You can read it, fork it, audit it, or self-host it.

🧱 Tech Stack

Built with battle-tested, production-grade technologies, including Express.js, PostgreSQL, BullMQ backed by Redis, Axios, Cheerio, and Helmet.js.

🔒 Security & Privacy

We take security seriously. Here's what we do to keep your data safe:

- ✓ Ephemeral data — All crawl results are automatically deleted after 1 hour. CSV exports are deleted after 5 minutes. We don't store your data permanently.

- ✓ No tracking or profiling — We use Google Analytics only for basic page views. We don't build user profiles or sell data.

- ✓ Parameterized queries — All database queries use parameterized inputs, so user-supplied values are never concatenated into SQL. This closes off the standard SQL injection path.

- ✓ HTTP security headers — Helmet.js applies HSTS, XSS protection, content-type sniffing prevention, and more.

- ✓ Rate limiting — API endpoints are rate-limited to prevent abuse (200 POST requests per 15 min per IP).

- ✓ Responsible disclosure — Security vulnerabilities can be reported via our security.txt contact.
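As an illustration of the rate-limiting item above, here is a minimal fixed-window limiter sketch. Only the numbers (200 requests per 15 minutes per IP) come from the list; the class name `FixedWindowLimiter`, its `allow` method, and the fixed-window strategy itself are assumptions, and the production middleware may work differently.

```typescript
// Fixed-window rate limiter sketch (hypothetical names, in-memory only).
class FixedWindowLimiter {
  private readonly limit: number;
  private readonly windowMs: number;
  private readonly windows = new Map<string, { start: number; count: number }>();

  constructor(limit: number = 200, windowMs: number = 15 * 60 * 1000) {
    this.limit = limit;
    this.windowMs = windowMs;
  }

  // Returns true if the request is allowed, false if the IP is over its limit.
  allow(ip: string, now: number = Date.now()): boolean {
    const entry = this.windows.get(ip);
    if (entry === undefined || now - entry.start >= this.windowMs) {
      // First request from this IP, or the previous window has expired.
      this.windows.set(ip, { start: now, count: 1 });
      return true;
    }
    if (entry.count >= this.limit) {
      return false;
    }
    entry.count += 1;
    return true;
  }
}
```

A server would typically answer a rejected request with HTTP 429 and let the client retry once the window has rolled over.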

⚙️ Under the Hood — How the Crawl Pipeline Works

A step-by-step look at the entire process — from the moment you paste a URL to the CSV landing in your inbox.

URL Submission & Validation

You paste a URL and hit "Start Crawl." The Express.js server validates the URL format, extracts the root domain, and checks PostgreSQL for an existing crawl on the same domain. If a recent crawl exists, you're asked whether to re-crawl or use existing results — no wasted work.
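The validation step can be sketched with the standard `URL` class. The function name `validateSubmission` is hypothetical, and treating the "root domain" as the hostname without a leading `www.` is an assumption; the actual server may use stricter rules.

```typescript
// Hypothetical helper: validates a submitted URL and derives the crawl scope.
interface ValidatedUrl {
  startUrl: string;
  rootDomain: string;
}

function validateSubmission(input: string): ValidatedUrl | null {
  let parsed: URL;
  try {
    parsed = new URL(input.trim());
  } catch {
    return null; // not a parseable absolute URL
  }
  // Only web pages can be crawled, so reject schemes such as ftp: or javascript:.
  if (parsed.protocol !== "http:" && parsed.protocol !== "https:") {
    return null;
  }
  // Assumption: the root domain is the hostname minus a leading "www.".
  const rootDomain = parsed.hostname.toLowerCase().replace(/^www\./, "");
  return { startUrl: parsed.href, rootDomain };
}
```

The derived `rootDomain` is the natural key for the "existing crawl on the same domain" lookup described above.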

Job Creation & Queuing

A new crawl job is inserted into PostgreSQL (status: pending) and immediately pushed to a BullMQ queue backed by Redis. You get a unique tracking URL you can bookmark or share — close the tab anytime and come back later.

BFS Crawl Execution

A crawler worker (concurrency: 5 parallel crawls) picks up the job and runs breadth-first search scoped to the root domain. For every page, Axios fetches the HTML (15s timeout, custom User-Agent) and Cheerio parses it to extract:

- Page title & meta description

- HTTP status code & server response time

- Content type, page size, word count

- Internal & external link counts

- Redirect chains traced to final destination

- Indexability status (noindex, canonical tags)
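The exact indexability rules are not spelled out here, so the following is only a sketch under assumed rules: a page counts as indexable when it returns 200, has no `noindex` robots directive, and has no canonical tag pointing to a different URL. The `PageSignals` type and `isIndexable` function are hypothetical.

```typescript
// Hypothetical indexability check based on assumed rules.
interface PageSignals {
  url: string;
  status: number;
  metaRobots: string | null; // content of <meta name="robots">, if present
  canonical: string | null;  // href of <link rel="canonical">, if present
}

function isIndexable(page: PageSignals): boolean {
  if (page.status !== 200) return false;
  if (page.metaRobots !== null && /\bnoindex\b/i.test(page.metaRobots)) {
    return false;
  }
  if (page.canonical !== null) {
    // Resolve relative canonicals against the page URL before comparing.
    const canonical = new URL(page.canonical, page.url).href;
    if (canonical !== new URL(page.url).href) return false;
  }
  return true;
}
```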

Each discovered internal link is normalized, deduplicated, and added to the BFS queue. Each crawled page is atomically inserted into PostgreSQL with counters updated in real time. A 50ms delay between requests limits the load placed on the target server.
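The normalize, deduplicate, and enqueue loop can be sketched as follows. To stay self-contained, the sketch is synchronous and takes a hypothetical `getLinks` callback in place of the Axios fetch and Cheerio parse. The normalization rules (drop the fragment and any trailing slash) are assumptions, and the 50ms delay and database writes are omitted.

```typescript
// Stand-in for the fetch-and-parse step: returns the raw hrefs found on a page.
type LinkFetcher = (url: string) => string[];

// Assumed normalization: resolve against the current page, drop "#fragment",
// and strip a trailing slash except on the site root.
function normalizeUrl(raw: string, base: string): string | null {
  try {
    const u = new URL(raw, base);
    u.hash = "";
    let href = u.href;
    if (u.pathname !== "/" && href.endsWith("/")) href = href.slice(0, -1);
    return href;
  } catch {
    return null; // unparseable href
  }
}

function crawlBfs(startUrl: string, rootDomain: string, getLinks: LinkFetcher): string[] {
  const start = normalizeUrl(startUrl, startUrl);
  if (start === null) return [];
  const seen = new Set<string>([start]); // deduplication set
  const queue: string[] = [start];
  const visited: string[] = [];

  while (queue.length > 0) {
    const current = queue.shift() as string; // FIFO order gives breadth-first traversal
    visited.push(current);
    for (const raw of getLinks(current)) {
      const next = normalizeUrl(raw, current);
      if (next === null || seen.has(next)) continue;
      const host = new URL(next).hostname.replace(/^www\./, "");
      if (host !== rootDomain) continue; // external link: never queued
      seen.add(next);
      queue.push(next);
    }
  }
  return visited;
}
```

Marking a URL as seen at enqueue time, not at visit time, keeps the same page from entering the queue twice when several pages link to it.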

Real-Time Progress Tracking

Your browser polls the GET /api/crawl/:job_id/progress endpoint every 5 seconds. The progress bar shows pages crawled vs. pages discovered — so you always know how far along the crawl is. Job status transitions: pending → running → completed.
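A small sketch of the status transitions and the progress calculation behind the bar. The names `advance` and `progressPercent` are hypothetical, and rounding to a whole percentage is an assumption about how the figure is displayed.

```typescript
type JobStatus = "pending" | "running" | "completed";

// Allowed transitions, as listed above: pending → running → completed.
const NEXT_STATUS: Record<JobStatus, JobStatus | null> = {
  pending: "running",
  running: "completed",
  completed: null,
};

function advance(status: JobStatus): JobStatus {
  const next = NEXT_STATUS[status];
  if (next === null) throw new Error("job is already completed");
  return next;
}

// Pages crawled as a percentage of pages discovered so far.
function progressPercent(crawled: number, discovered: number): number {
  if (discovered <= 0) return 0; // nothing discovered yet
  return Math.min(100, Math.round((crawled / discovered) * 100));
}
```

Because the discovered count grows while the crawl runs, the percentage can move backwards between polls; that is expected with a BFS crawl of unknown size.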

Results & Email Notification

Once the BFS queue is exhausted, the job is finalized (status: completed). If you provided an email, a styled HTML notification is sent via Gmail SMTP with a "View Full Results" button. There's a race-condition fix too: if the crawl finishes before you submit your email, the notification fires immediately after you add it.
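One way to handle that race is to run the same check from both code paths, so whichever event arrives second sends the email. The sketch below is an in-memory illustration with hypothetical names (`notifyIfReady`, `completeJob`, `attachEmail`); the real pipeline would persist the `notified` flag in the database.

```typescript
interface Job {
  status: "pending" | "running" | "completed";
  email: string | null;
  notified: boolean;
}

type SendFn = (email: string) => void;

// Sends at most one notification, and only once the job is done AND an email is known.
function notifyIfReady(job: Job, send: SendFn): void {
  if (job.status === "completed" && job.email !== null && !job.notified) {
    job.notified = true;
    send(job.email);
  }
}

// Called by the worker when the BFS queue is exhausted.
function completeJob(job: Job, send: SendFn): void {
  job.status = "completed";
  notifyIfReady(job, send);
}

// Called by the API when the user submits an email address.
function attachEmail(job: Job, email: string, send: SendFn): void {
  job.email = email;
  notifyIfReady(job, send);
}
```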

Paginated Results View

Browse your crawl results in a paginated, searchable table with 50, 100, 500, or 1,000 results per page. Every column is visible: URL, title, meta description, HTTP status, response time, content type, page size, internal/external links, redirect URL, and indexability.
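Pagination of this kind usually reduces to a limit and an offset. A minimal sketch, assuming 1-based page numbers and a fallback to 50 rows for unsupported sizes (both assumptions; `pageWindow` is a hypothetical name):

```typescript
const ALLOWED_PAGE_SIZES = [50, 100, 500, 1000];

// Converts a 1-based page number and a requested size into LIMIT/OFFSET values.
function pageWindow(page: number, pageSize: number): { limit: number; offset: number } {
  // Assumption: unsupported sizes fall back to the smallest allowed size.
  const limit = ALLOWED_PAGE_SIZES.indexOf(pageSize) >= 0 ? pageSize : 50;
  // Assumption: invalid page numbers fall back to the first page.
  const safePage = Number.isInteger(page) && page >= 1 ? page : 1;
  return { limit, offset: (safePage - 1) * limit };
}
```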

CSV Export Pipeline

Click "Export CSV" and a second BullMQ queue (csv-export, concurrency: 2) processes the request. The export worker fetches all crawled pages from PostgreSQL, builds a 12-column CSV in memory (URL, title, meta, status, response time, content type, size, internal links, external links, redirect, indexable, timestamp), writes it to disk, and sends you a download link via email. Export deduplication prevents duplicate requests within 5 minutes.
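The in-memory CSV build can be sketched like this. The 12 columns follow the list above, but the header names, the `CrawledPage` field names, and the RFC 4180 style quoting are assumptions, not the export worker's actual code.

```typescript
interface CrawledPage {
  url: string;
  title: string | null;
  metaDescription: string | null;
  status: number;
  responseTimeMs: number;
  contentType: string | null;
  sizeBytes: number;
  internalLinks: number;
  externalLinks: number;
  redirectUrl: string | null;
  indexable: boolean;
  crawledAt: string; // ISO 8601 timestamp
}

const CSV_HEADER = [
  "url", "title", "meta_description", "status", "response_time_ms", "content_type",
  "size_bytes", "internal_links", "external_links", "redirect_url", "indexable", "crawled_at",
];

// Quotes a field only when it contains a comma, quote, or line break.
function csvField(value: string | number | boolean | null): string {
  const text = value === null ? "" : String(value);
  return /[",\r\n]/.test(text) ? '"' + text.replace(/"/g, '""') + '"' : text;
}

function buildCsv(pages: CrawledPage[]): string {
  const rows = pages.map((p) =>
    [
      p.url, p.title, p.metaDescription, p.status, p.responseTimeMs, p.contentType,
      p.sizeBytes, p.internalLinks, p.externalLinks, p.redirectUrl, p.indexable, p.crawledAt,
    ].map(csvField).join(","),
  );
  return [CSV_HEADER.join(",")].concat(rows).join("\r\n") + "\r\n";
}
```

Escaping matters here because page titles and meta descriptions routinely contain commas and quotes, which would otherwise shift columns in a spreadsheet.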

Automatic Cleanup

Two scheduled cron jobs run in the background: one deletes crawl jobs (and all their pages) after 1 hour, and another removes CSV export files after 5 minutes. No data is stored permanently — ephemeral by design.
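The selection logic inside each cleanup job amounts to a time-to-live filter. A minimal sketch using the two retention periods stated above; the `Timestamped` shape and the `expiredIds` helper are hypothetical, and the real jobs presumably run the equivalent query in PostgreSQL and on the file system.

```typescript
const CRAWL_TTL_MS = 60 * 60 * 1000; // crawl jobs: 1 hour
const EXPORT_TTL_MS = 5 * 60 * 1000; // CSV export files: 5 minutes

interface Timestamped {
  id: string;
  createdAt: number; // epoch milliseconds
}

// Returns the ids of all items whose age has reached the given TTL.
function expiredIds(items: Timestamped[], ttlMs: number, now: number): string[] {
  return items.filter((item) => now - item.createdAt >= ttlMs).map((item) => item.id);
}
```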

Architecture Flow

👤 Who Built This?

Hasibul Islam

Full-Stack Developer

I built WebCrawler.buzz as an open-source tool to make website auditing accessible to everyone — for free. The entire source code is public on GitHub. If you find a bug, have a feature request, or want to contribute, you're welcome to open an issue or pull request.

📖 API & Documentation

WebCrawler.buzz has a fully documented REST API. You can integrate it into your own tools or use it programmatically.

🌐 Community & Social

WebCrawler.buzz is shared and discussed across developer communities. Follow along for updates, behind-the-scenes, and new features.

Why Trust This Free Web Crawler?

Open Source

100% of the code is public. Audit it yourself.

No Data Retention

Crawls auto-delete in 1 hour. We don't keep your data.

Free Forever

No signup, no limits, no paywalls. Just paste a URL.

Full API Docs

Swagger docs, health checks, public stats.

Product Hunt

Launched and featured on Product Hunt.